Let the Spectacle Astound You

Note: This article originally appeared in the January 2026 issue of Live Sound International under a different title.

“Forget everything about how you would typically do this, and then let’s figure out how we are going to do it.” This was theatrical sound designer Brett Jarvis’s instruction to his audio team when they began work on the sound design for Masquerade, a cutting-edge, immersive adaptation of Andrew Lloyd Webber’s Phantom of the Opera, directed by Diane Paulus.

The technology underpinning Phantom on Broadway remained essentially unchanged throughout its record-setting 35-year run, so modern audiences were often unaware that the show’s dated tech was considered boundary-pushing at its 1988 debut. When the show closed in 2023, plans were underway for a refreshed re-staging that would again incorporate the latest technical theater innovations into an engaging, modern format.

Jarvis was well qualified for the job. Since the late 2000s, he has worked almost exclusively on “reactive” theater performances, which don’t follow any preset order or structure. He knew he needed show control technology that was able to keep up, so he partnered with long-time friend and colleague Sean Beach to create an application called ShowPulse.

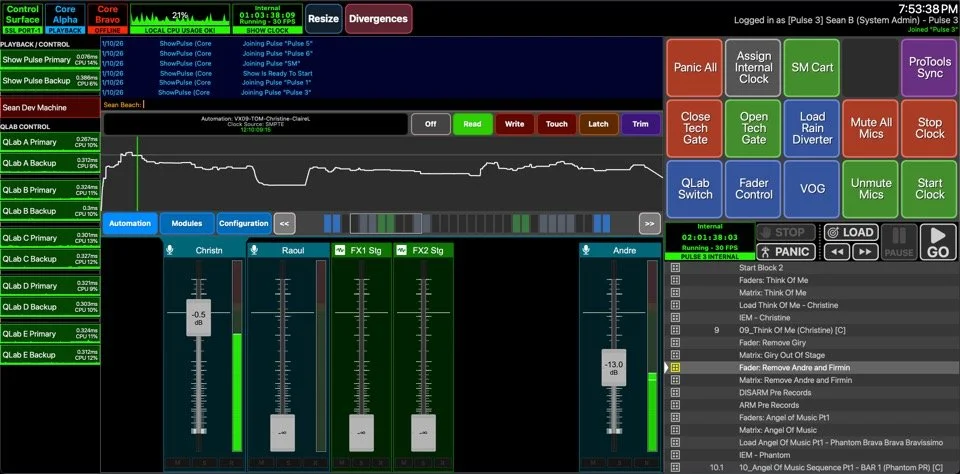

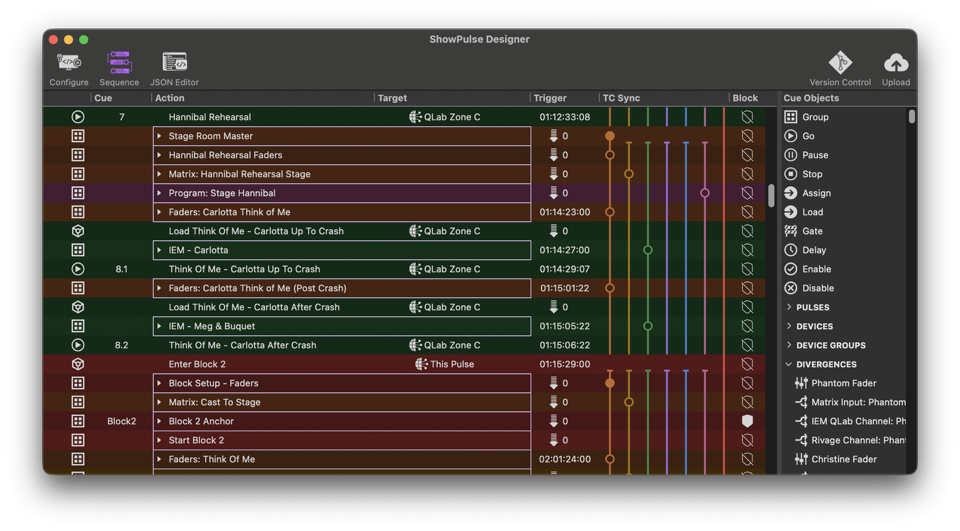

ShowPulse acts as a master control platform for immersive musical theater productions, able to coordinate elements such as mixing consoles and playback software via an extensive and dynamically adjustable set of parameters and instructions, a “digital conductor” of sorts. The program can seamlessly jump between different parts of a show in real time and can even run multiple instances of the production simultaneously without interfering with each other.

Direct Connection

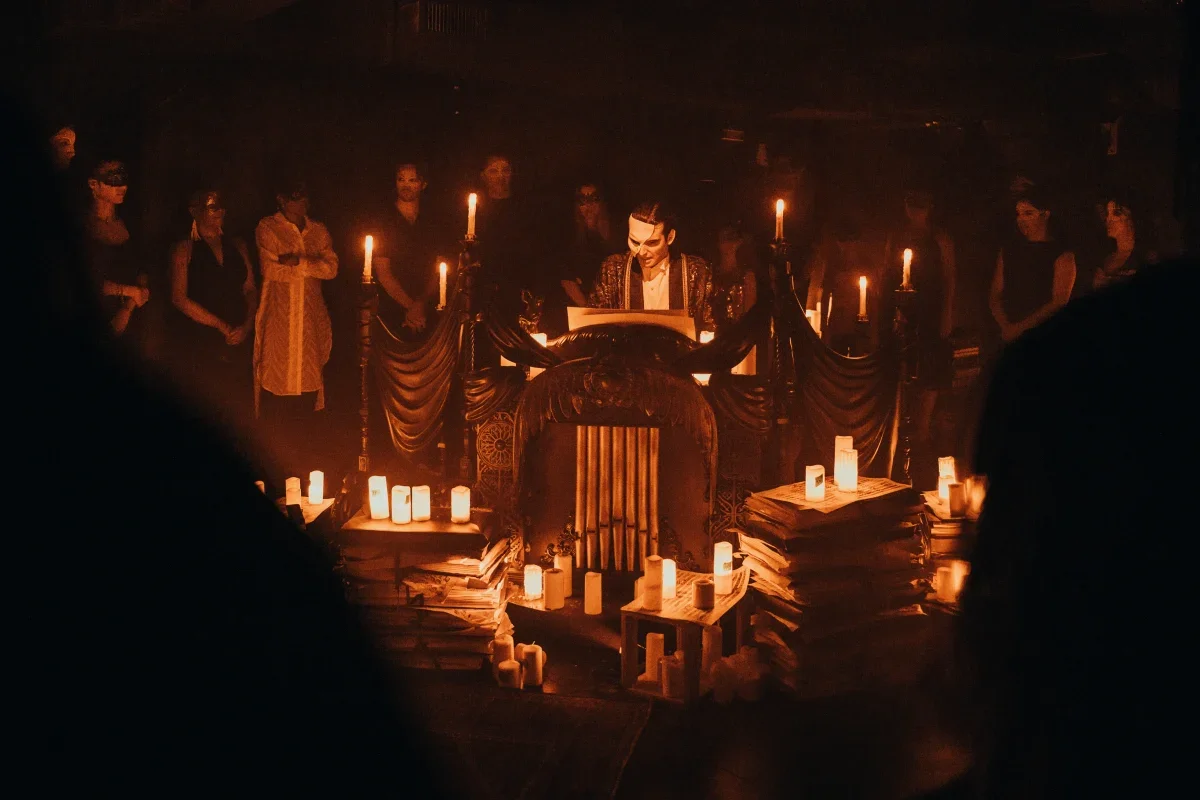

Rather than seating viewers to watch the action unfold on a stage, Masquerade physically places audience members in the middle of the story, even having them interact directly with the show’s actors. Audiences are divided into groups of 60 people, and each group – called a pulse – moves independently through the show experience, which takes place in a renovated art supply shop on 57th Street in New York City.

During the two-hour performance, each pulse follows its dedicated cast of performers along a twisting, labyrinthine path that spans all five floors of the building (and the rooftop), through the elaborate sets and environments of the Paris Opera House as the story unfolds.

Sprinkled throughout the performance are dozens of off-the-cuff, one-on-one interactions with the characters. A scene early in the show places the audience amid the bustling chaos of a dress rehearsal on the stage of the opera house.

“Can you believe I had to donate that, just to get an invitation?” the actor playing wealthy opera patron Raoul de Chagny said to me as he pushed past, motioning to the massive chandelier overhead. “Although I do think it’s a bit small for the room.”

At the same time, audience members on the other side of the room were experiencing a different perspective on the action, along with different exchanges with different cast members before a musical number commenced.

Under The Hood

No two audience experiences are the same, which raises some interesting challenges for the production. The behind-the-scenes logistics are extremely complex, even bordering on “intimidating.”

A show run involves six pulses, staggered by 15 minutes. Each pulse has a dedicated cast of actors wearing earset mics and in-ear monitors; they move continuously across all six levels and must be reinforced through a different loudspeaker system in each room. Since there’s no orchestra conductor, all performers need to hear clicks and count-ins to keep the performance coordinated. All of this must happen simultaneously for all six audience pulses snaking their way through the building, with some performance spaces being visited multiple times per pulse.
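The staggered schedule is easy to underestimate. As a minimal sketch in Python, with a hypothetical 7 p.m. first start and a hypothetical scene 40 minutes into each pulse’s route (neither figure comes from the production), the arrival times work out like this:

```python
from datetime import datetime, timedelta

# From the article: six pulses, 15 minutes apart.
PULSE_COUNT = 6
STAGGER = timedelta(minutes=15)


def pulse_start_times(first_start: datetime, count: int = PULSE_COUNT,
                      stagger: timedelta = STAGGER) -> list[datetime]:
    """Start time of each pulse, offset from the first by a fixed stagger."""
    return [first_start + i * stagger for i in range(count)]


def scene_arrivals(first_start: datetime, scene_offset: timedelta) -> list[datetime]:
    """Wall-clock time at which each pulse reaches a scene at a given offset."""
    return [start + scene_offset for start in pulse_start_times(first_start)]


if __name__ == "__main__":
    # Hypothetical evening: first pulse at 7:00 p.m., scene 40 minutes in.
    first = datetime(2026, 1, 15, 19, 0)
    for n, t in enumerate(scene_arrivals(first, timedelta(minutes=40)), start=1):
        print(f"Pulse {n}: {t:%H:%M}")
```

With a 15-minute stagger, the sixth pulse starts 75 minutes after the first, so if every pulse keeps the same timing, a given scene recurs every 15 minutes across the evening.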

As soon as he started work on Masquerade in 2023, Jarvis knew the ShowPulse platform would be the technical key to enabling the vision of the show by controlling and interfacing the various components, including a Yamaha Rivage DSP engine and three Sennheiser Spectera wireless systems for microphones and IEMs. (Jarvis expressed deep gratitude to the manufacturer for its involvement to support the massive channel counts required.)

To keep everything in lockstep, the production makes extensive use of timecode. “Because of the layout of the building, and the way that the architecture of the show demanded we use the building, the audience flow is non-linear; we use rooms multiple times, and so we have to rely on timecode very heavily,” Jarvis explains.

Andrew Lloyd Webber’s longtime collaborator Lee McCutcheon produced all the music and audio content for the show, and his background in film and theater enabled him to develop a multi-timecode map of the show run. According to McCutcheon, who has worked with Lloyd Webber on many recordings and movies of Phantom over the years, this totally new style of experience required a fresh musical approach that delivered a seamless, carefully controlled experience across audience groups. McCutcheon worked closely with Jarvis to develop the overall sound of the show, describing their collaboration as “magic.”

“We don’t have any people mixing live, no conductors, no music person triggering cues. But it feels completely spontaneous and you can hear every one of the 28 live actors’ mics across all six audience groups clearly without one line dropped. I’d say that is pretty incredible,” McCutcheon said of ShowPulse.

Jarvis explained to me that the key to achieving this was the precise programming that handles all the matrixing in the Biamp Tesira DSP to make sure the right mics are popping up at the right times, in the right places.

Besides a global timecode clock, there are six pulse-specific clocks for cueing tracks and events, and 24 geographic timecode zones that are baked into specific audio tracks to drive lighting and fader movements. ShowPulse handles all the matrix changes for the performers as they move through the rooms.

Though having more than 30 timecode streams running at once may sound chaotic, it’s a more efficient and flexible approach, explains Beach: “The benefit of a geographic timecode zone is that since a song will occur in some area at least six times, we are able to reuse the same code over and over, making programming more manageable.”
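The stream count checks out: one global clock, six pulse clocks and 24 geographic zones make 31. Beach’s reuse argument can be sketched in Python as a cue list programmed once against zone-local time and simply offset for each pulse’s visit. The cue names and times below are hypothetical, and this is an illustration of the idea rather than how ShowPulse stores its programming.

```python
from dataclasses import dataclass

# Clock counts quoted in the article.
GLOBAL_CLOCKS = 1
PULSE_CLOCKS = 6
GEO_ZONE_CLOCKS = 24
TOTAL_STREAMS = GLOBAL_CLOCKS + PULSE_CLOCKS + GEO_ZONE_CLOCKS  # 31


@dataclass(frozen=True)
class ZoneCue:
    """A cue programmed once against zone-local time, in seconds."""
    local_time: float
    action: str


# Hypothetical cue list for one song in one geographic zone (not show data).
SONG_CUES = [
    ZoneCue(0.0, "lights: preset A"),
    ZoneCue(12.5, "faders: lift chorus"),
    ZoneCue(30.0, "lights: blackout"),
]


def schedule_visit(visit_start: float,
                   cues: list[ZoneCue] = SONG_CUES) -> list[tuple[float, str]]:
    """Map zone-local cues onto absolute show time for one pulse's visit.

    The cue list never changes between visits; only the start offset does.
    """
    return [(visit_start + cue.local_time, cue.action) for cue in cues]


if __name__ == "__main__":
    # Hypothetical: six pulses reach this song 15 minutes (900 s) apart.
    for pulse in range(6):
        print(pulse + 1, schedule_visit(2400.0 + pulse * 900.0))
```

Programming effort scales with the number of songs rather than the number of performances of each song, which is the manageability Beach describes.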

The building is divided into five geographic zones, each served by a redundant pair of Mac mini computers running QLab, which send 128 channels of show audio via AVB for playback, plus an additional 64 channels of utility audio for IEMs and timecode. (Two additional redundant machines run the ShowPulse software itself.)
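Taken at face value, those figures add up quickly. Here is a back-of-the-envelope tally in Python, assuming the backup machine in each pair mirrors its primary rather than adding unique channels:

```python
# Figures quoted in the article.
ZONES = 5
MACHINES_PER_ZONE = 2          # redundant pair of QLab machines per zone
SHOW_AUDIO_CHANNELS = 128      # playback channels over AVB
UTILITY_CHANNELS = 64          # IEM and timecode feeds
SHOWPULSE_MACHINES = 2         # redundant ShowPulse hosts

channels_per_zone = SHOW_AUDIO_CHANNELS + UTILITY_CHANNELS
playback_machines = ZONES * MACHINES_PER_ZONE
total_machines = playback_machines + SHOWPULSE_MACHINES

# Assumption: each backup mirrors its primary, so one set per zone is counted.
building_channels = ZONES * channels_per_zone

print(f"{channels_per_zone} channels per zone, {building_channels} across the building")
print(f"{playback_machines} playback machines, {total_machines} machines in all")
```

Under that assumption, the playback rig feeds 960 channels into the DSP matrix from 10 playback machines, with 12 machines in all.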

All those signals are fed into an array of Biamp Tesira Server DSPs which form a giant matrix, distributing audio signals to the amplifier rooms on each floor. From there, Jarvis estimates that they used close to 10 miles of loudspeaker cable to drive more than 1,000 loudspeakers spread throughout the building.

“The Tesira is not new technology, by any means, but in my opinion, when you approach something that is inherently this complicated, any problem you can solve with simple, old-school, Winchester technology is to your benefit,” he says. “Because every time we do one of these productions, we have to do some things that we haven’t done before, or that no one has done before, and we need to be able to focus on that.”

Jarvis credits systems integrator Stephan Wojtecki and production audio Mike Wojchik with navigating all the challenges that arose in the tech process.

Nailing The Mix

“There is no control surface for the Rivage,” explains Jarvis, who mixed performances by each cast during tech rehearsals on a Solid State Logic UF8 DAW controller. “ShowPulse is the control surface. The Rivage is in a rack somewhere and we connect to it from the ShowPulse operator station, which can be anywhere in the building. That’s what we did during tech and previews – I could camp out in a room and mix each cast as they came in, so everyone has their own fader moves, EQ, and dynamics. And then the system plays back that control data, with some tweaks that we add in on a nightly basis.”
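Jarvis doesn’t describe how ShowPulse stores or replays those moves. One common way to replay recorded automation, offered here only as a hypothetical Python sketch, is to keep timestamped fader levels and interpolate linearly between them, adding a nightly trim on top:

```python
from bisect import bisect_right

# Hypothetical keyframes captured while mixing one performer: (seconds, fader dB).
Keyframes = list[tuple[float, float]]


def fader_at(keys: Keyframes, t: float, trim_db: float = 0.0) -> float:
    """Interpolate a recorded fader move at time t, then apply a nightly trim.

    Before the first keyframe the first level holds; after the last, the last holds.
    """
    if not keys:
        raise ValueError("no keyframes recorded")
    times = [k[0] for k in keys]
    i = bisect_right(times, t)
    if i == 0:
        level = keys[0][1]
    elif i == len(keys):
        level = keys[-1][1]
    else:
        (t0, v0), (t1, v1) = keys[i - 1], keys[i]
        level = v0 + (v1 - v0) * (t - t0) / (t1 - t0)
    return level + trim_db


if __name__ == "__main__":
    # Hypothetical fade-up over ten seconds, then a hold.
    move = [(0.0, -60.0), (10.0, -10.0), (20.0, -10.0)]
    print(fader_at(move, 5.0), fader_at(move, 5.0, trim_db=1.5))
```

Keeping the trim separate from the recorded move means a nightly tweak never overwrites the performance captured in tech.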

ShowPulse’s Divergences feature allows the operator to select the actors playing each role in every pulse; it then recalls the proper fader moves, equalization, dynamics and effects parameters for each individual performer. During the show run, all these parameters are communicated to the Rivage mix engine in real time via OSC. A sophisticated combination of feedback loops, including signal level meter data and an SPL microphone in each room, is used to dynamically adjust the mix parameters on the fly.
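The article doesn’t describe ShowPulse’s internals or the Rivage’s OSC address space. Apart from the OSC 1.0 message format, everything below is hypothetical: the casting table, the per-actor settings, the channel numbers and the `/input/...` addresses. The Python sketch shows how a per-performer recall could be turned into OSC packets:

```python
import struct


def _osc_string(text: str) -> bytes:
    """Encode an OSC string: ASCII, null-terminated, padded to a multiple of 4 bytes."""
    data = text.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def osc_message(address: str, *values: float) -> bytes:
    """Build an OSC 1.0 message whose arguments are all big-endian 32-bit floats."""
    type_tags = "," + "f" * len(values)
    args = b"".join(struct.pack(">f", v) for v in values)
    return _osc_string(address) + _osc_string(type_tags) + args


# Hypothetical per-performer settings captured during tech rehearsals.
PROFILES = {
    "actor_a": {"gain_db": 2.0, "hpf_hz": 120.0},
    "actor_b": {"gain_db": -1.0, "hpf_hz": 90.0},
}
# Hypothetical casting: which actor plays each role in each pulse tonight.
CASTING = {1: {"Raoul": "actor_a"}, 2: {"Raoul": "actor_b"}}
# Hypothetical mixer input channel assigned to each (pulse, role).
CHANNELS = {(1, "Raoul"): 11, (2, "Raoul"): 21}


def recall_messages(pulse: int) -> list[bytes]:
    """Build one OSC message per stored parameter for every role in a pulse."""
    messages = []
    for role, actor in CASTING[pulse].items():
        channel = CHANNELS[(pulse, role)]
        for param, value in PROFILES[actor].items():
            messages.append(osc_message(f"/input/{channel}/{param}", value))
    return messages
```

Each packet could then be sent over UDP with Python’s `socket.sendto`. Swapping the actor in `CASTING` is the only change needed to recall a different performer’s settings on the same channel.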

During the show, A1 Alan Busch monitors everything from the control room and will manually adjust as needed. A2 Tiel Starzynski is also standing by to address any issues that require physical access to the actors’ packs. The software also dynamically manages the QLab stacks for all the pre-recorded content in the show, which is broken down by actor and role, so that any triggered voiceover elements properly correspond with the actor currently playing that role.

On top of all that, ShowPulse automatically switches between several hundred discreetly mounted IP cameras as each pulse progresses, providing tailored views of the action to the control room and the stage managers on each floor. (The cameras pointed at the chandelier are hard-wired and latency-free, to ensure the necessary precision in monitoring its motion.) For safety purposes, the motion of the chandelier, along with all the other automation elements in the show, is monitored and controlled by discreetly placed crew members in each room with direct line of sight.
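How ShowPulse picks a camera isn’t spelled out. A simplified Python sketch, assuming one hypothetical camera per room and a hypothetical route, shows how a pulse’s elapsed time could select the room, and therefore the feed, for its stage manager:

```python
from bisect import bisect_right
from typing import Optional

# Hypothetical route: (minutes into a pulse, room). Not the real show order.
ROUTE = [(0, "lobby"), (10, "opera stage"), (35, "fly loft"), (60, "underground lake")]
# Hypothetical single camera per room; the real system uses several hundred.
CAMERAS = {
    "lobby": "cam-01",
    "opera stage": "cam-14",
    "fly loft": "cam-27",
    "underground lake": "cam-33",
}
STAGGER_MINUTES = 15  # pulses start 15 minutes apart, per the article


def current_room(minutes_into_pulse: float) -> Optional[str]:
    """Room a pulse occupies at a point in its own run (None before it starts)."""
    if minutes_into_pulse < ROUTE[0][0]:
        return None
    starts = [start for start, _ in ROUTE]
    return ROUTE[bisect_right(starts, minutes_into_pulse) - 1][1]


def camera_for_pulse(show_minutes: float, pulse_index: int) -> Optional[str]:
    """Camera feed for a pulse (0-based), given minutes since the first pulse began."""
    room = current_room(show_minutes - pulse_index * STAGGER_MINUTES)
    return CAMERAS[room] if room else None
```

Twenty minutes into the evening, for example, the first pulse would be on the opera stage while the second is still in the lobby and the third hasn’t started.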

“We looked and looked, and to my knowledge there’s still nothing out there that can handle this complexity in such a graceful way,” explains Jarvis. “There are other ways to do it, and we’ve explored those, but this platform takes away that layer of abstraction or complexity, and gives us the speed and repeatability that we really need.”

ShowPulse chief architect Sean Beach elaborates: “The nature of this production is just so extremely complicated. There are several layers of virtual matrices to get the right signals to the right place and support failover. We have fire alarm interlocks to mute the sound system if the alarms go off, but certain signal routes must remain open for announcements.

A look at the ShowPulse cue stack

“There were days where we just moved 60 people around the building to understand timings. At one point we were even coordinating with the HVAC system, because that equipment is on the roof, and there’s songs up there that we need to hear. Every single department had to work together and adapt, and the final product was coordinated down to the thirtieth of a second. We needed to be able to quickly grab a single performer’s mic, or an entire room, or an entire floor, and make changes, without spending a ton of time programming; ShowPulse is the tool that lets us do that.”
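The article doesn’t give the frame rate. If the show’s timecode runs at 30 frames per second (non-drop-frame, an assumption here), one frame is exactly a thirtieth of a second, about 33 ms. A small Python sketch of the conversions a cue system needs:

```python
FPS = 30  # assumption: 30 fps non-drop-frame timecode


def tc_to_frames(tc: str, fps: int = FPS) -> int:
    """Convert HH:MM:SS:FF timecode into a frame count."""
    hh, mm, ss, ff = (int(part) for part in tc.split(":"))
    if not (0 <= mm < 60 and 0 <= ss < 60 and 0 <= ff < fps):
        raise ValueError(f"invalid timecode: {tc!r}")
    return ((hh * 60 + mm) * 60 + ss) * fps + ff


def frames_to_tc(frames: int, fps: int = FPS) -> str:
    """Convert a frame count back into HH:MM:SS:FF timecode."""
    seconds, ff = divmod(frames, fps)
    minutes, ss = divmod(seconds, 60)
    hh, mm = divmod(minutes, 60)
    return f"{hh:02d}:{mm:02d}:{ss:02d}:{ff:02d}"


def frames_to_ms(frames: int, fps: int = FPS) -> float:
    """Duration of a number of frames in milliseconds."""
    return frames * 1000.0 / fps
```

Drop-frame rates such as 29.97 fps need a different counting scheme, so a real system would have to know which format its timecode sources use.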

The production’s setting posed additional challenges. Extensive soundproofing was required to minimize the bleed of sound between adjacent performance areas being used by different pulses simultaneously. Working in conjunction with that acoustic treatment, the small-format array-style loudspeakers made by LD Systems proved extremely helpful, Jarvis found, in keeping the sound directed within the show’s small spaces.

Also, the dense RF environment of Manhattan required special accommodations to effectively turn the upper floors into a Faraday cage in order to give the show’s RF consultant, Cameron Stuckey, as much available spectrum as possible.

Beach provided an additional example of the flexibility that was required to adapt to the evolving needs of the production: “They needed a way to rehearse in different locations, isolated from the rest of the building, because you have other departments in other spaces working on other things. One of the major problems we had to solve was, how do we begin from bar 16 and keep everything tracking and triggering properly?

“We built a rehearsal computer on a tech cart that has the whole show in Pro Tools with timecode, and a timecode reader. They could roll around with a little speaker and feed timecode in from any location and do a rehearsal. We can also feed that rehearsal timecode into the Dante network, so we can chase it from ShowPulse, and it’ll realign the entire show as they scrub around and can start from wherever they want.”
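Beach doesn’t say how ShowPulse decides to relocate. The Python sketch below shows one plausible approach, assuming frames arrive one at a time and using a hypothetical two-frame tolerance. Any frame that doesn’t follow the previous one counts as a jump, and the system then works out which cues should already have fired at the new position.

```python
from bisect import bisect_right


class TimecodeChaser:
    """Follow incoming timecode frames and flag jumps that need a relocate."""

    def __init__(self, tolerance_frames: int = 2):
        self.tolerance = tolerance_frames
        self.last_frame = None
        self.relocations = []  # frames at which a realignment was triggered

    def update(self, frame: int) -> bool:
        """Accept one frame; return True if it is a jump rather than normal play."""
        expected = None if self.last_frame is None else self.last_frame + 1
        jumped = expected is None or abs(frame - expected) > self.tolerance
        if jumped:
            self.relocations.append(frame)
        self.last_frame = frame
        return jumped


def cues_already_fired(cue_frames: list[int], frame: int) -> int:
    """Number of cues, from a sorted list of cue frames, at or before `frame`."""
    return bisect_right(cue_frames, frame)


if __name__ == "__main__":
    chaser = TimecodeChaser()
    # Normal play from frame 100, then a scrub to frame 500 (e.g. "from bar 16").
    for f in [100, 101, 102, 500, 501]:
        if chaser.update(f):
            print("relocate at", f, "cues fired:", cues_already_fired([0, 90, 450, 900], f))
```

The tolerance lets the chaser ride through a dropped frame or two without treating it as a scrub.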

Photo: Matthew Murphy and Evan Zimmerman

Keeping It Natural

Jarvis and his team worked hard to maintain a natural aesthetic for the sound of the show. “This is a big design concept of mine – the sound system is there to heighten it, and help people feel the sound wrap around, but because we’re in such small rooms, it really needs to work on its own, acoustically, and the mics are just there for support.

“For example, the ‘Masquerade’ number – there are a few movements in the mix just based on the nature of it, a few lines here and there that we pop, but mostly we take the baseline that’s in the room, and we lift it.”

Many subtle sound design elements help create an atmospheric environment for the audience. A low, rolling drone that seems to come from everywhere at once is used to presage the Phantom’s arrival into a scene. The sounds of dripping water emanate from the dark corners of the underground lake environment. Chandelier beads rattle and glass smashes.

For a scene that takes place in the opera house’s fly loft, during a ballet performance on the stage below, approximately 50 loudspeakers are located beneath the floorboards and aimed downwards, creating the impression of the orchestra sound drifting up from beneath. As two actors engage in a dramatic fight, swinging from the rigging, with sandbags, backdrops and battens flying up and down, the sounds of the pulleys and rigging pipes banging and swelling in the rafters overhead are created by an additional 40 loudspeakers mounted near the ceiling and firing upwards.

From the perspective of an audience member, I felt that this design approach was successful at enhancing the experience and making the environments more believable and palpable, but never distracting from what the actors were doing.

Photo: Andy Henderson

When it comes to having the audience and the actors all together and moving around in small rooms, there is no “one size fits all” approach to timing. “The underground lake scene is a perfect example. There is timing in the system,” explains Jarvis, “but it’s not what you’d expect.

“I tried it both ways, and really scratched my head about this, the way the actors and audience flow through it – you have the whole room energized at once, and that room is all hard surfaces, it’s an echo chamber. You have the very loud, heavily orchestrated title song, and you want a tighter decay time in the room, to keep the articulation, but then in the same space we go right into ‘Music of the Night,’ this operatic aria, where you want more of a heavy rolling reverb in the room. And remember, there are six different casts, and they all sound and perform very differently.

“You have to rob Peter to pay Paul sometimes, to get the experience that you need. So we handle a lot of that electronically. But if you think about the timing… what are you timing to? Delay on one side of the room works for the audience there but creates a huge echo for the people on the other side. So you have to be creative with it. If you try to follow the traditional rules, you’ll drive yourself crazy.”
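Jarvis’s point about delay comes down to simple geometry. The rough Python sketch below uses a hypothetical room layout and assumes a speed of sound of 343 m/s. It adds a 10 ms lag, chosen so that the loudspeaker arrives just after the actor’s direct sound and the precedence effect keeps the image on the actor.

```python
import math

SPEED_OF_SOUND = 343.0  # metres per second, roughly, at room temperature


def arrival_ms(source, listener, delay_ms=0.0):
    """Time for sound from `source` to reach `listener`, plus any added delay."""
    return math.dist(source, listener) / SPEED_OF_SOUND * 1000.0 + delay_ms


def alignment_delay_ms(actor, speaker, listener, lag_ms=10.0):
    """Delay that makes the speaker land just after the actor at one listener."""
    gap = arrival_ms(actor, listener) - arrival_ms(speaker, listener)
    return max(0.0, gap + lag_ms)


# Hypothetical 20 m room, positions in metres along one axis.
actor = (8.0, 0.0)            # actor near the right wall
speaker = (-10.0, 0.0)        # loudspeaker on the left wall
left_seat = (-9.0, 0.0)       # audience member near the speaker
right_seat = (9.0, 0.0)       # audience member near the actor

delay = alignment_delay_ms(actor, speaker, left_seat)
gap_right = arrival_ms(speaker, right_seat, delay) - arrival_ms(actor, right_seat)
print(f"delay {delay:.1f} ms; right-side listener hears the speaker {gap_right:.0f} ms late")
```

In this layout, the delay that aligns the loudspeaker for the left-side listener makes it arrive more than 100 ms after the actor’s voice for someone on the right. That is far beyond the few tens of milliseconds at which a late arrival tends to be heard as a distinct echo.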